Opinionated Infrastructure-as-code Project Architecture

A quick introduction to best practices for properly starting an infrastructure-as-code project.

Infrastructure as Code (IaC) has been a trend for many years. While some standards are being defined today, we continue to hear about new methods or tools to improve and make our lives easier by automating our daily tasks as much as possible.

Fortunately or unfortunately, we can choose today between multiple automation tools like Ansible, Pulumi, Terraform, etc., each with its own benefits and disadvantages. Thus, choosing the right tool is not the easiest part. It requires team collaboration to identify, test and select the right tool. This collaboration is the key to success: it is important to secure the engagement of the other teams in the IaC project in order to properly automate each team’s processes.

Choosing the right tool is a challenge, but defining the architecture of the project is another one. Beyond the mono-repository versus multi-repository debate, the focus when defining an IaC project architecture should remain collaboration. Aim for simplicity to facilitate engagement.

The purpose of this post is to present an opinionated infrastructure-as-code project architecture used multiple times with different automation tools in different environments. But, let’s start with a quick introduction to what an IaC project is.

What is an infrastructure-as-code project?

Infrastructure as Code represents the management of any infrastructure component (Cloud architecture, networks, virtual or physical servers, load balancers, etc) in a descriptive model. Based on the practices of software development, it emphasizes consistent, repeatable routines for provisioning and changing systems and their configuration. Like the principle that the same source code generates the same binary, an IaC model generates the same environment every time it is applied. IaC is a key DevOps practice.

Basically, that means IaC is a methodology for provisioning, configuring and managing an IT infrastructure through machine-readable definition files, which makes it easy to version the state of an entire infrastructure. This methodology is not new; it has been improved over the years and continues to improve today. An IaC project could potentially have no end since it evolves with the business requirements of the company.
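To make the "same model, same environment" idea more concrete, here is a minimal sketch in Python. It is a toy model and not how any real tool is implemented: the resource names, the `plan` and `apply` functions and the dictionary-based state are all hypothetical and only illustrate the principle.

```python
def plan(desired: dict, current: dict) -> list:
    """Return the actions needed to move `current` towards `desired`."""
    actions = []
    for name, spec in sorted(desired.items()):
        if name not in current:
            actions.append(("create", name))
        elif current[name] != spec:
            actions.append(("update", name))
    for name in sorted(current):
        if name not in desired:
            actions.append(("delete", name))
    return actions


def apply(desired: dict, current: dict) -> dict:
    """Converge the state: the result always matches the description."""
    return dict(desired)


# Hypothetical descriptive model and drifted environment.
desired = {"web-1": {"size": "small"}, "lb-1": {"ports": [80, 443]}}
state = {"web-1": {"size": "large"}, "old-db": {"size": "small"}}

first_plan = plan(desired, state)   # three changes are needed
state = apply(desired, state)
second_plan = plan(desired, state)  # nothing left to do
```

Applying the same description a second time produces an empty plan, which is the behaviour we expect from a descriptive model.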

An IaC project has multiple benefits. The main ones are:

- Improving velocity: it makes the software development life cycle more efficient by allowing someone to quickly set up a complete infrastructure.

- Improving consistency: humans are fallible, and automating manual processes avoids many mistakes. Automation is the key to consistency and reproducibility.

- Reducing cost: coupled with a good cloud computing offering, IaC can dramatically reduce the cost of infrastructure management. Money spent on hardware, operators and physical resources adds up, but with IaC, automation frees engineers from performing manual, slow and error-prone tasks so they can focus on what matters most.

Based on this, IaC coupled with the right tool and an appropriate project architecture can be powerful for every engineer in a company. Automation (and IaC) is not reserved for the DevOps team, so when it is time to make a choice, keep the entire infrastructure in mind to improve not only your daily tasks but also those of your colleagues. Keep in mind that collaboration is the key to success!

How to start an infrastructure-as-code project?

This is an opinionated architecture that has proved its efficiency in multiple contexts. It has obviously been improved over several iterations and can probably be improved again. Keep in mind that an IaC project cannot be defined correctly on the first attempt; it requires several iterations to adapt to the company’s work methodology.

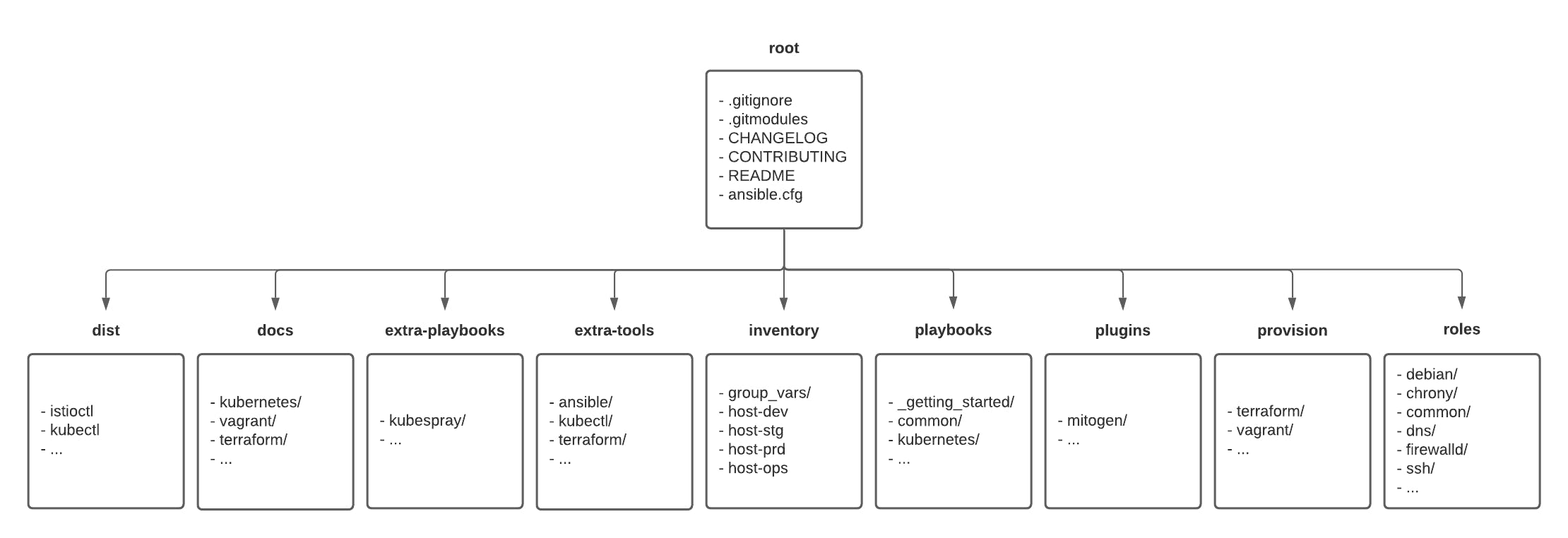

Here is a quick explanation of the directory architecture:

- Root is the entrypoint of the project. It contains the main documentation for getting started with the project (README, CONTRIBUTION files) as well as the CHANGELOG file to keep track of every update.

- Dist is an optional folder created automatically by an automation script that configures the local environment to easily on-board anyone in the project. This is mainly used to centralize symlinks to the binaries stored in the “extra-tools” folder and used by the IaC project.

- Docs is where more documentation on the project can be found. The idea is to keep the documentation next to the source code to version both of them and keep them in sync.

- Extra-playbooks is a folder where external playbooks can be downloaded automatically. The idea is to separate internal and external resources to keep a clear understanding of what piece of code can or cannot be updated.

- Extra-tools is a folder with the binaries of each tool used to manage the IaC architecture. Having them available locally is useful in case any role needs them to run an action.

- Inventory is the location of the global information shared by the automation tools, like the inventory of each resource in the different environments.

- Playbooks is the location of the internal playbooks developed by the engineering team.

- Plugins is an optional folder created automatically by an automation script that configures the local automation tool to easily extend its functionalities.

- Provision is the location of the automation code used to provision the infrastructure. It can target Cloud or on-premise resources (Terraform, Pulumi, etc) as well as the local testing environment (Vagrant, Docker, Kubernetes). This folder is divided into sub-folders (one per tool) to easily identify which tool manages the provisioning.

- Roles is the location of the different roles used by the playbooks to configure the resources provisioned.

This directory architecture can be used to logically separate the provisioning code from the configuration code without having to manage multiple repositories which, in some cases, can be a benefit.

That way, a full automation can be easily handled by the same project, like the provisioning of a virtual machine with Terraform and automatically configuring it with a local provisioner like Ansible.
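As an illustration, the directory architecture described above could be bootstrapped with a few lines of Python. This is only a sketch: the exact folder spelling, the file extensions and the `terraform` and `vagrant` sub-folders are assumptions, and the `dist` and `plugins` folders are left out because they are generated by the automation scripts.

```python
from pathlib import Path

# Folder names follow the description above; the exact spelling and the
# provision sub-folders are assumptions made for this example.
FOLDERS = [
    "docs",
    "extra-playbooks",
    "extra-tools",
    "inventory",
    "playbooks",
    "provision/terraform",
    "provision/vagrant",
    "roles",
]

# File names and extensions are assumptions as well.
ROOT_FILES = ["README.md", "CONTRIBUTING.md", "CHANGELOG.md"]


def scaffold(root) -> list:
    """Create the skeleton under `root` and return the created paths."""
    root = Path(root)
    created = []
    for folder in FOLDERS:
        path = root / folder
        path.mkdir(parents=True, exist_ok=True)  # safe to run again
        created.append(path)
    for name in ROOT_FILES:
        path = root / name
        path.touch(exist_ok=True)  # keeps existing content untouched
        created.append(path)
    return created
```

Calling `scaffold("my-iac-project")` (a hypothetical project name) creates the skeleton, and running it a second time changes nothing.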

How to collaborate as a team?

Collaboration is the key to success. As a great proverb says: “Alone we go faster, together we go further!”. Developing an IaC project as a team prevents someone from building an architecture that nobody else can understand, and also helps avoid choosing the wrong automation tools. Keep in mind that an IaC project should be usable by anyone in the engineering team of a company to automate their processes. The main goal of the DevOps methodology is to reduce the gap between operations and developers. IaC can help, but to achieve that, the project requires involvement from everybody.

Obviously, an IaC project developed by different teams requires project management to divide it into a roadmap, tasks and sub-tasks, and to follow progress over time. This post doesn’t aim to explain how a project should be managed, but what teams can do to collaborate more easily.

Version the code

This step is often forgotten by operations teams, but keep in mind that an IaC project should be treated like any other software project. Version control is the practice of tracking and managing changes to source code over time to prevent concurrent work from conflicting. It also introduces release management to quickly roll back to a previous version when needed.

A versioning convention has to be defined, and potentially enforced by a continuous delivery pipeline that automatically tags a repository, a state file or a bucket.

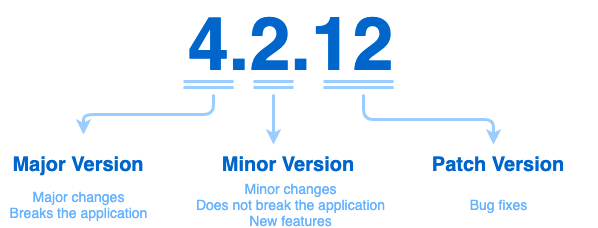

This convention can be as simple as semantic versioning (MAJOR.MINOR.PATCH). In other words, the convention for an IaC project could look like this:

- MAJOR version when you introduce breaking changes, like upgrading an automation tool in a way that potentially changes the behaviour of an API or requires rewriting code,

- MINOR version when you add functionality in a backwards compatible manner, like adding a new role, introducing a new tool, etc,

- PATCH version when you make backwards compatible bug fixes or formatting changes.
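To show how a pipeline could compute the next tag from this convention, here is a small sketch. The `bump` function and its `level` argument are hypothetical names, and the sketch only handles plain `MAJOR.MINOR.PATCH` strings.

```python
def bump(version: str, level: str) -> str:
    """Return the next version for a 'MAJOR.MINOR.PATCH' string."""
    major, minor, patch = (int(part) for part in version.split("."))
    if level == "major":
        # Breaking change: lower components are reset.
        return f"{major + 1}.0.0"
    if level == "minor":
        # Backwards compatible feature, such as a new role.
        return f"{major}.{minor + 1}.0"
    if level == "patch":
        # Backwards compatible bug fix or formatting.
        return f"{major}.{minor}.{patch + 1}"
    raise ValueError(f"unknown level: {level}")
```

For instance, adding a new role on top of version `1.4.2` would give `bump("1.4.2", "minor")`, which returns `1.5.0`.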

Use branches with explicit names

Another best practice from the development side that an IaC project should follow is the usage of branches. Branches, in any source control software, allow people to diverge from the production version of the code to fix a bug or add a feature. Basically, branches allow you to develop your changes without having any impact on production or on others’ work.

It is highly recommended to use an explicit name when creating a branch, so that someone else can refer to it and quickly understand whether this branch is still in development or not. A best practice is to name the branch after the ticket number of the current task to quickly refer to an identifier in the project management tool.
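Such a naming rule can be checked automatically. The sketch below assumes a hypothetical convention where a branch starts with a ticket identifier such as `OPS-142`, optionally followed by a short lowercase description; the pattern, the ticket format and the environment branch names are assumptions to adapt to your own project management tool.

```python
import re

# Assumed convention: <TICKET>[-short-description], e.g. OPS-142-add-ntp-role
BRANCH_PATTERN = re.compile(
    r"^(?P<ticket>[A-Z]+-\d+)(?:-(?P<slug>[a-z0-9]+(?:-[a-z0-9]+)*))?$"
)

# Long-lived branches that do not need a ticket number (assumed names).
ENVIRONMENT_BRANCHES = {"main", "staging", "development"}


def ticket_from_branch(name: str):
    """Return the ticket identifier of a branch, or None if it has none."""
    match = BRANCH_PATTERN.match(name)
    return match.group("ticket") if match else None


def is_valid_branch(name: str) -> bool:
    """Accept environment branches and branches named after a ticket."""
    return name in ENVIRONMENT_BRANCHES or ticket_from_branch(name) is not None
```

With this convention, `OPS-142-add-ntp-role` is accepted and maps to ticket `OPS-142`, while a branch named `fix-stuff` is rejected.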

Another best practice is to keep the main branch for the production code and a dedicated branch for each environment. That way, a workflow can be defined to first deploy every change in the development environment/branch, then in a staging environment/branch, and finally release the changes in the production/main branch.

Write explicit commit messages

Another best practice often forgotten by operations teams is the formatting of commit messages to ensure a good understanding of the changes.

Again, it is highly recommended to define a commit message convention to extract useful information from each update.

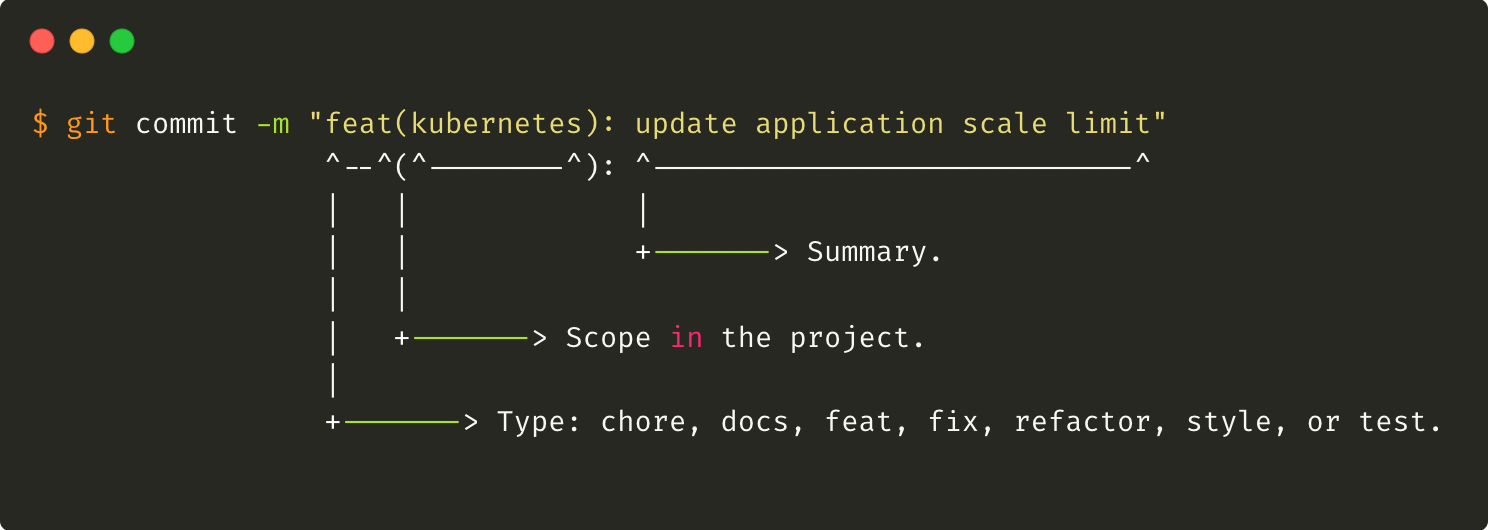

Here is an example of a commit message convention composed of multiple pieces of information that can be used to analyze a project and quickly get insight into its maturity:

In other words, the convention is composed of 3 components: a type, a scope and a summary.

The type defines the global purpose of the commit:

- docs: update of the documentation like a README file.

- feat: add new functionality to the project.

- fix: code update to fix a bug.

- refactor: code update that doesn’t introduce new features.

- format: code linting.

- test: code change on unit tests.

- ci: code change on the continuous integration process.

The scope defines which sub-component of the project is impacted, and the summary describes the update in fewer than 80 characters.

Using a convention requires small commits, so that the type of each commit is easy to identify. If you don’t know which type to use, that could mean that your commit must be divided into multiple commits.

A GitHub project named “git-semantic-commits” is a good introduction to automating the format of commit messages created in the command line.

To ensure that every contributor follows the format, a pre-commit rule can easily be enforced with any source control software.

Commit messages are important for the collaboration of multiple people on the same project. Used properly, they can help in many ways, specifically during bug fixes and rollback processes.
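As a rough idea of what such a rule could check, here is a sketch of a validator for the first line of a commit message. It assumes the three components are written as `type(scope): summary`, which is only one possible layout; the `check_header` function name and the error messages are hypothetical.

```python
import re

# Types listed in the convention above.
TYPES = ("docs", "feat", "fix", "refactor", "format", "test", "ci")

# Assumed layout: type(scope): summary
HEADER = re.compile(
    r"^(?P<type>[a-z]+)\((?P<scope>[^()\s]+)\): (?P<summary>\S.*)$"
)


def check_header(header: str) -> list:
    """Return a list of problems found in the first line of a commit."""
    match = HEADER.match(header)
    if match is None:
        return ["header must look like 'type(scope): summary'"]
    errors = []
    if match.group("type") not in TYPES:
        errors.append(f"unknown type '{match.group('type')}'")
    if len(match.group("summary")) >= 80:
        errors.append("summary must be shorter than 80 characters")
    return errors
```

With Git, for example, this function could be called from a `commit-msg` hook, which receives the path of the file containing the message as its first argument, and the hook would reject the commit when the returned list is not empty.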

Review the code

The most important point of collaboration is code review, a software quality assurance activity in which people check the IaC code mainly by viewing and reading parts of it, and then decide whether to continue or interrupt the implementation.

A basic process should require at least one approval to merge the code into the main (aka production) branch.

Facilitate project on-boarding

Collaboration means involvement, and one of its pain points is probably the on-boarding process: the time required for a contributor to grasp the philosophy of the project, understand the best practices and commit their first update.

This obviously depends on multiple factors: the quality of the documentation, the quality of the code and the time required to set up a local environment to start developing an update. Setting up an environment can take time as it requires installing or updating tools, configuring them, downloading dependencies, config files, etc.

One way to make it easier for anyone interested to contribute to the IaC project is to take advantage of the automation tools to also configure the “base” profile required by any contributor. That way, everybody shares the same local environment configuration (version of tools, dependencies, etc) and can easily on-board on the project.

No time is wasted on research or meetings to set up the environment with the correct version or command line: there is only one playbook to run to be ready to jump into the IaC adventure.

In the architecture presented here, this was managed by Ansible, which installs Python, Terraform, kubectl, Helm, Mitogen, etc. all at once to easily configure multiple environments (Linux, macOS, etc).
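The playbook itself is out of the scope of this post, but a quick pre-flight check gives an idea of what "ready to contribute" means. The sketch below is not the Ansible playbook mentioned above; it is a hypothetical Python helper that only reports which command line tools are missing from the `PATH`, and the list of executable names is an assumption.

```python
import shutil

# Executable names are assumptions based on the tools listed above.
REQUIRED_TOOLS = ("python3", "terraform", "kubectl", "helm")


def missing_tools(tools=REQUIRED_TOOLS, which=shutil.which) -> list:
    """Return the tools that cannot be found on the PATH."""
    # `which` is injectable so the check can be tested without the tools.
    return [tool for tool in tools if which(tool) is None]
```

A contributor could run `missing_tools()` before starting: an empty list means that every expected tool has been found locally.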

A quick overview of tools available

We cannot talk about infrastructure as code without mentioning tools. So here is a quick list of tools that deserve specific attention when it’s time to start an IaC project.

Provisioning with Terraform

Terraform is an open source tool developed by HashiCorp that allows DevOps engineers to programmatically provision the resources needed to run an application. Based on the HashiCorp Configuration Language (aka HCL), it allows anyone to easily maintain the state of an entire infrastructure by provisioning and re-provisioning it across multiple cloud and on-premises data centers. Thanks to its many providers and modules, Terraform is able to manage almost every resource required by an application.

Terraform is designed to keep multiple states up to date and to share them, but working as a team can become complicated over time if a DevOps process is not followed properly. Fortunately, an external tool named Atlantis has been developed to manage the code review and automatic merge of any update in a Terraform project. It is definitely a tool to use to catch errors or mistakes before pushing something to production.

Testing is an important piece of the DevOps methodology, and an IaC project is not exempt. A great tool named Terratest has been developed to automate the testing of Terraform resources. This Go library, developed by Gruntwork, helps to create and automate tests for IaC written with Terraform or Packer, whether it targets IaaS providers like Amazon and Google or a Kubernetes cluster. It is a good step to add to an automated pipeline.

Configuration with Ansible

Ansible is a tool that provides powerful automation for cross-platform computer support. It can be used by any IT professionals to manage application deployment, updates on workstations and servers, cloud provisioning, configuration management, and nearly anything a systems administrator does on a weekly or daily basis. Ansible, with its idempotency, has dramatically improved the scalability, consistency, and reliability of IT environments.

Ansible can be used with or without Terraform to easily manage the resources required by any application. Even though Terraform can do more than provisioning today, the two have long been used together: Terraform handles the provisioning only, while Ansible configures the components with more flexibility.

As with any programming language, Ansible definition files must be tested and reviewed before moving to production. Two different tools can be used to automate this: Molecule and ansible-test. Both are good and easy to use to run tests on Ansible playbooks and validate their behaviour before reviewing the code.

To improve Ansible performance, a great Python library named Mitogen can be added to Ansible to dramatically reduce the execution time and the network traffic generated when running playbooks. It is highly recommended for an IaC project based on Ansible.

As explained in a previous section, an IaC project must be flexible enough to change methodology, architecture or tool if necessary. Every year, new tools are developed and adopted by the community, and aim to compete with the best ones on the market. This is the case of Pulumi, another IaC tool focused on a programming language approach. Thus, if you are familiar with a programming language like Python, Go, etc, and you are about to start or refactor an IaC project, take a look at the Pulumi project. It definitely deserves attention.

Run your code locally in a virtual environment

An IaC project must be seen as an application project. It must follow the best practices regarding the development of the definition files, and specifically the test step. In certain cases, it is better to test an update locally before testing it in a development or testing environment.

Fortunately, multiple tools exist today to help DevOps engineers test their code before moving it to production. Vagrant, Docker, Podman, Buildah, Minikube, Kind, MicroK8s, etc can all be used to emulate specific environments and test a piece of an IaC project.

Vagrant, for example, is a great open-source tool to create a virtual environment (based on VirtualBox for example) to test the deployment of a piece of software on any specific operating system.

Docker, Podman and Buildah can be used to do the same thing in a container environment while Minikube, Kind, MicroK8s can be used to do it in a local Kubernetes cluster.

Depending on the use case, we have today multiple approaches to easily introduce testing in an IaC project and be proactive on potential issues instead of being reactive.

Build your own images with Packer (optional)

An IaC project is usually composed of a “base” profile regarding the compute resources. A base profile or a common profile is a list of roles that the automation tool needs to apply on any compute resource to ensure consistency across resources.

This base profile can be composed of the configuration of the root password, the NTP servers, the SMTP servers, the deployment of the monitoring tools, etc…

Sometimes, when it comes to improving the IaC project by reducing the time to spin up a new machine, the base profile becomes a problem. One known option to improve this is to reduce the base profile by creating a custom base image of an operating system.

To do that in a programmatic way, Packer can be used. Packer is an open source tool, developed by HashiCorp, that creates identical machine images for multiple platforms from a single source configuration. The images created can then be uploaded and used in the Cloud or by any on-premise virtualization platform.

Packer can also be combined locally with Vagrant: Packer builds the custom image, and Vagrant starts a virtual machine from it to test a piece of the IaC project locally. It is definitely a good combination to improve the deployment of any custom resources.

This step is obviously optional and depends on the infrastructure requirements.

Emulate Cloud services locally

Emulating Cloud services locally is important to reduce the cost of an IaC project. Instead of generating an entire environment to test a piece of code, some tools can be used to emulate some Cloud services locally before testing the code in production.

For AWS users, a great project named LocalStack has been developed and is, today, really powerful for testing Terraform or Ansible code locally. LocalStack can be easily deployed in a local virtual environment (virtual machine or container) to mock many AWS endpoints.
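To give an idea of what "mocking AWS endpoints" looks like from the client side, here is a small sketch that builds the connection settings for a local LocalStack instance. It assumes LocalStack listens on its default port 4566 on `localhost`; the `localstack_client_kwargs` helper and the dummy credential values are hypothetical, and the boto3 library mentioned in the comment is a third-party package that is not covered in this post.

```python
def localstack_client_kwargs(host="localhost", port=4566, region="us-east-1"):
    """Build the settings that point an AWS client to a local emulator."""
    return {
        # Every request goes to the local endpoint instead of AWS.
        "endpoint_url": f"http://{host}:{port}",
        "region_name": region,
        # Placeholder credentials, never real ones for a local emulator.
        "aws_access_key_id": "test",
        "aws_secret_access_key": "test",
    }


# Possible usage, assuming boto3 is installed and LocalStack is running:
#   import boto3
#   s3 = boto3.client("s3", **localstack_client_kwargs())
#   s3.create_bucket(Bucket="iac-test-bucket")
```

The same idea applies to Terraform or Ansible: the code stays identical, only the endpoint targeted by the tool changes.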

For GCP users, the gcloud command currently offers some experimental features to emulate a limited set of endpoints.

Next?

This article is an opinionated presentation of an infrastructure-as-code architecture to easily and correctly start a project. There are probably thousands of other ways to do this and this architecture can probably be improved, so feel free to share your ideas in the comments!

For more information:

- Semantic versioning definition

- Semantic-release tools to help automatic release

- Conventional commits

- Localstack with Terraform and Docker for running AWS locally

About the authors

Hicham Bouissoumer — DevOps

Nicolas Giron — Site Reliability Engineer (SRE) — DevOps